Questions about “robots after the humans are gone” have gotten me thinking more about what might eventually become of our human knowledge-verse, which in turn led me to various on-line discussions about the potential long-term advantages of archiving on paper. So now I’m thinking about that.

Certainly digital media possess vastly greater bandwidth than old-fashioned print. On the other hand, it is not clear that any given digital storage medium of today will be readable far in the future. In theory, future civilizations could examine our magnetic tapes, compact discs and flash storage devices, and reverse engineer them to find out what was going on way back in the twenty-first century. But such a theory assumes that future civilizations will have a lot in common with our own.

In the event of some disruptive calamity, it is not clear that surviving humans will have digital computers, but it is highly likely that some existing languages will survive. In such circumstances, as civilization gradually pulls itself together, books will continue to be useful.

But what about that limited bandwidth problem? A printed book will typically contain around 2000 to 3000 characters per page. If we really want to help those future generations get a good start, we might want to do better than that.

One on-line discussion I saw talked about using ink on paper to print in binary format — essentially using paper as a digital medium. If you print only 1’s and 0’s (as tiny dots and spaces), you can get about 500,000 bytes — equivalent to 500,000 characters — on one side of a single sheet of paper.
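To make the binary-on-paper idea concrete, here is a minimal Python sketch. It is not taken from that discussion: it assumes plain 8-bit ASCII, and the helper names, the `#` and `.` stand-ins for a printed dot and a blank space, and the row width are all invented for illustration.

```python
DOT = "#"    # stands in for a printed dot (bit 1); assumption for illustration
BLANK = "."  # stands in for an unprinted space (bit 0); assumption for illustration


def text_to_dot_rows(text: str, bytes_per_row: int = 4) -> list[str]:
    """Render ASCII text as rows of dots and blanks, 8 bits per character."""
    bits = "".join(format(byte, "08b") for byte in text.encode("ascii"))
    row_len = bytes_per_row * 8
    rows = [bits[i:i + row_len] for i in range(0, len(bits), row_len)]
    return [row.replace("1", DOT).replace("0", BLANK) for row in rows]


def dot_rows_to_text(rows: list[str]) -> str:
    """Decode rows produced by text_to_dot_rows back into text."""
    bits = "".join(rows).replace(DOT, "1").replace(BLANK, "0")
    values = [int(bits[i:i + 8], 2) for i in range(0, len(bits), 8)]
    return bytes(values).decode("ascii")


if __name__ == "__main__":
    for row in text_to_dot_rows("Forever font"):
        print(row)
```

For example, `text_to_dot_rows("Hi", bytes_per_row=2)` yields the single row `.#..#....##.#..#`. Nothing in that row hints at the letters it encodes, which is exactly the decoding burden a reader without a computer would face.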

This is great, but it assumes that future humans will be able to decode binary. If those folks don’t have any computers, that might be a very big assumption indeed.

So I started wondering whether there might be some way to compromise, a way to retain some of the advantages of text (readability forever into the future, as long as language itself doesn’t die) while picking up some of the benefits of binary encoding (far higher storage density).

I came up with a text font that might do the trick. The Forever font is composed of little on-off patterns of printed dots, just like a binary encoding on paper. But unlike a binary pattern, it is directly readable by humans. The result is not quite as compact as binary encoding — it takes about twice as many bits. So rather than being able to fit 500,000 characters on a page, you would only be able to fit 250,000 characters.

Still, going from 2500 characters per page to 250,000 characters per page is not too shabby — it lets you replace every 100 pages with a single page. You could store an entire reference library in a single book.

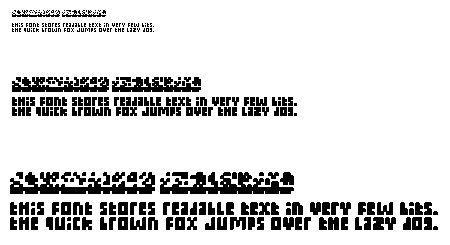

Below you can see the font at three different scales, together with the equivalent binary (ASCII) encoding. In each case, in the binary encoding the two lines of text are side-by-side, whereas in the Forever font they are arranged one below the other:

Each Forever font character is four dots high, and from one to five dots wide (the more frequent characters tend to be skinny). To achieve the full 250,000 characters per page, you would scale down the font so that each dot is as small as possible — about 1/300 of an inch is the limit for ink printed on ordinary paper. Of course you would need a strong magnifying glass to read print this small. So in practice, you’d start with large and easily readable text at the top of each page, and then gradually taper down the text size on subsequent lines. That way it would be clear to the reader that there is indeed text to be read in the tiny sized print.
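The page-capacity figures can be roughly sanity-checked with a few lines of Python. The 300 dots per inch and the factor of two come from the text above; the printable area (US Letter with one-inch margins) is purely an assumption, since no page size or margin is stated here, and the function names are made up for this sketch.

```python
DOTS_PER_INCH = 300        # from the post: each dot about 1/300 of an inch
PRINT_WIDTH_IN = 6.5       # assumption: US Letter width minus 1-inch margins
PRINT_HEIGHT_IN = 9.0      # assumption: US Letter height minus 1-inch margins
BITS_PER_ASCII_CHAR = 8    # one byte per character in the binary encoding
FOREVER_BITS_FACTOR = 2    # from the post: about twice as many bits per character
TYPICAL_PRINT_CHARS = 2500 # midpoint of the post's 2000 to 3000 characters per page


def dots_per_page() -> int:
    """Total printable dot positions on one side of the assumed page."""
    return round(PRINT_WIDTH_IN * DOTS_PER_INCH) * round(PRINT_HEIGHT_IN * DOTS_PER_INCH)


def binary_chars_per_page() -> int:
    """Characters per page if every dot position carries one bit of ASCII."""
    return dots_per_page() // BITS_PER_ASCII_CHAR


def forever_chars_per_page() -> int:
    """Characters per page at roughly twice the bits per character."""
    return dots_per_page() // (BITS_PER_ASCII_CHAR * FOREVER_BITS_FACTOR)


if __name__ == "__main__":
    print("dots per page:     ", dots_per_page())
    print("binary characters: ", binary_chars_per_page())
    print("Forever characters:", forever_chars_per_page())
    print("gain over print:   ", forever_chars_per_page() / TYPICAL_PRINT_CHARS)
```

Under these assumptions the estimate comes out somewhat above the rounded figures quoted here (about 650,000 binary characters and about 330,000 Forever characters), which is the same order of magnitude. A smaller printable area or extra spacing between lines would bring it down.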

One could object that future civilizations might not have access to a magnifying glass. On the other hand, it is reasonable to assume that the convex lens will be rediscovered well before the digital computer. Meanwhile, the need for a magnifier will be immediately self-evident to anyone who sees a size-tapered page of text in Forever font, and this need might even prompt some innovation.

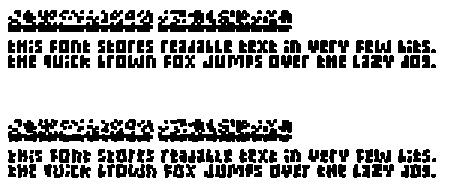

Another advantage of the Forever font over binary encoding is its robustness. Paper can be damaged, and printing at small sizes is inaccurate. If noise creeps into the positions of the dots (as shown in the lower text, below), the binary encoding becomes undecodable, whereas the Forever font is still readable:

The fact that the text appears as visually identifiable words and characters (which people are quite good at recognizing) — together with that extra factor of two in space — results in a far more robust and error-resistant encoding than you could get from the binary encoded version.
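The fragility of a raw binary encoding can be illustrated with a small Python sketch. It models a simpler failure than the positional noise described above: a single flipped dot, and a single lost dot position that shifts everything after it. It assumes unframed 8-bit ASCII with no error correction, and the helper names are hypothetical.

```python
def encode_bits(text: str) -> str:
    """Encode ASCII text as a string of '0' and '1', 8 bits per character."""
    return "".join(format(byte, "08b") for byte in text.encode("ascii"))


def decode_bits(bits: str) -> str:
    """Decode complete 8-bit groups; any trailing partial group is ignored."""
    chars = []
    for i in range(0, len(bits) - 7, 8):
        chars.append(chr(int(bits[i:i + 8], 2)))
    return "".join(chars)


def flip_bit(bits: str, index: int) -> str:
    """Simulate one dot printed where a space should be, or vice versa."""
    flipped = "1" if bits[index] == "0" else "0"
    return bits[:index] + flipped + bits[index + 1:]


def drop_bit(bits: str, index: int) -> str:
    """Simulate one lost dot position, shifting every later bit by one."""
    return bits[:index] + bits[index + 1:]


if __name__ == "__main__":
    clean = encode_bits("Forever font")
    print(repr(decode_bits(clean)))               # round trip, no damage
    print(repr(decode_bits(flip_bit(clean, 6))))  # one wrong character
    print(repr(decode_bits(drop_bit(clean, 3))))  # everything after is garbled
```

A flipped bit silently turns one character into another, and a lost bit misaligns every character that follows. A human reading letter shapes has no such alignment to lose, which is one way to see why the visually recognizable form degrades more gracefully.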

Have you seen this? Rosetta project by the Long Now Foundation: http://rosettaproject.org/ Basically, they’re etching text at 1000x reduction on a metal disc.

This is a great idea with strong execution. I wonder how machine-readable the text is at small sizes. If it’s still machine-readable, this would be a very interesting way to store information that needs to be physically accessible to both machines (OCR) and people. Are you planning on releasing this font?

This is a very clever idea and you should run with it.

A modern laser-printed page has better archival characteristics than the Domesday Book. Most paper is now acid-free. It’s chemically stable cellulose that few creatures can eat. Laser toner is black plastic powder, heat-fused to the paper and thus waterproof and fadeproof. It’s not fireproof, but nothing short of stone or metal is anyway. And it’s available in virtually unlimited quantities.

If you wanted to preserve the most important terabyte or two of our civilization’s legacy in a form that can survive and is accessible with little effort, you could do worse than a cargo container full of Xeroxes.

“One could object that future civilizations might not have access to a magnifying glass. On the other hand, it is reasonable to assume that the convex lens will be rediscovered well before the digital computer.”

You’re still assuming that future civilizations will be able to read and understand the English of our times. If the technology and culture are lost, the language components and constructs will be lost as well.

I invite you to check out the Rosetta Disk project, which has done a lot of work in this area: http://rosettaproject.org/

If they don’t have computers, why bother with binary when you can just use microfiche? http://en.wikipedia.org/wiki/Microform

Yes, microfiche gets you a factor of 25, with the caveat that it requires the use of specialty equipment. And if you’re going to go the route of specialty equipment, there are quite a few alternatives (e.g., photo-etching). Also, you need to keep microfilm in a dry environment, or it gets eaten away by fungus (film is more prone to this problem than paper is). By the way, the combination of the high-resolution panchromatic film of microfiche and the Forever font could be intriguing. Rather than the 25-fold compression of microfiche alone, you’d get a 2500-fold compression (25 from the film times 100 from the font). It would be an interesting thing to try.
