An eccescope, like any eye-centric augmented reality device, needs to do more than project an image into your eye. It also needs to make sure that this image lines up properly with the physical world around you. That requires tracking the position and orientation of your head with high speed and accuracy. Otherwise, the displayed image will appear to drift and swim within the world, rather than seeming like a part of your environment.

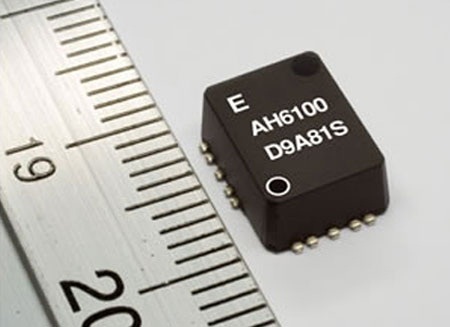

Fortunately, there are technologies today that are up to the job, although they have not yet been integrated into one package. For high-speed reliability and responsiveness, there are devices like the Epson Toyocom Inertial Measurement Unit (IMU). This device uses tiny electronic gyroscopes and accelerometers to measure rotation and movement quickly and accurately. And it’s only 10 mm long (about 0.4 inches), which means it can fit unobtrusively on an earpiece.

But that’s not quite enough. Inertial sensors respond quickly to head movements, but over time the measured angle will drift. The Epson Toyocom IMU has a drift of about six degrees per hour, which is very impressive, but still not good enough: an eccescope needs to have no significant angular drift over time.
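To get a feel for what six degrees per hour means in practice, here is a rough back-of-the-envelope sketch. It assumes the drift accumulates at a constant rate (real gyro drift is less tidy than that) and that the virtual object sits about 60 cm away, roughly arm's length. The function name and both of those numbers are illustrative assumptions, not anything from Epson's specifications.

```python
import math

def drift_offset_cm(drift_deg_per_hour, minutes, distance_cm):
    """Return (accumulated drift in degrees, apparent sideways shift in cm).

    Assumes a constant drift rate, which is a simplification.
    """
    drift_deg = drift_deg_per_hour * minutes / 60.0
    shift_cm = distance_cm * math.tan(math.radians(drift_deg))
    return drift_deg, shift_cm

# 6 deg/hour is the figure quoted above; 60 cm is an assumed viewing distance.
for minutes in (1, 10, 60):
    angle, shift = drift_offset_cm(6.0, minutes, 60.0)
    print(f"{minutes:>2} min: {angle:.1f} deg of drift, about {shift:.1f} cm at arm's length")
```

Under these assumptions a virtual object would slide about a centimeter off its mark after ten minutes and about six centimeters after an hour, which is easily noticeable.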

Fortunately there are other technologies that give absolute measurements, even though they are not as fast. For example, a small video camera can look out into the environment and track features of the room around you (such as corners, where the edges of things meet). As you move your head, the camera tracks the apparent movement of these features.

The two types of information (fast but drift-prone inertial tracking, and slow but drift-free video feature tracking) can be combined to give a very good answer to the question: “What is the exact position and orientation of my head right now?” And with good engineering, all of the required components can fit in an earpiece using today’s technology.
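One simple way to combine a fast, drifting signal with a slow, absolute one is a complementary filter. The sketch below is a minimal illustration for a single angle, not a description of how any particular product does it. Real trackers work with full 3D orientation and often use a Kalman filter instead. The function name `fuse_yaw`, the sample rates, the `blend` factor, and the deliberately exaggerated gyro bias are all assumptions chosen to make the effect visible.

```python
def fuse_yaw(gyro_rates, camera_yaws, dt, blend=0.1):
    """Complementary filter for one angle, in degrees.

    gyro_rates:  angular rate per time step in deg/s (fast, but biased).
    camera_yaws: absolute yaw per time step, or None when the camera
                 has no new measurement (slow, but drift-free).
    blend:       fraction of the camera/gyro disagreement corrected
                 each time a camera measurement arrives.
    """
    yaw = 0.0
    history = []
    for rate, cam in zip(gyro_rates, camera_yaws):
        yaw += rate * dt                # fast path: integrate the gyro
        if cam is not None:
            yaw += blend * (cam - yaw)  # slow path: nudge toward the camera
        history.append(yaw)
    return history

# Hypothetical setup: the head is held perfectly still (true yaw = 0),
# the gyro runs at 1000 Hz with an exaggerated bias of 0.5 deg/s, and
# the camera reports the true yaw on every 33rd step (about 30 Hz).
dt = 0.001
steps = 60_000                          # 60 seconds
gyro = [0.5] * steps
camera = [0.0 if i % 33 == 0 else None for i in range(steps)]
no_camera = [None] * steps

gyro_only = fuse_yaw(gyro, no_camera, dt)
fused = fuse_yaw(gyro, camera, dt)

print(f"gyro only after 60 s: {gyro_only[-1]:.2f} deg off")
print(f"fused after 60 s:     {fused[-1]:.2f} deg off")
```

The gyro still supplies every high-rate update, so responsiveness is unchanged. The camera only has to pull the estimate back toward the truth a few dozen times per second, which is enough to keep the error bounded instead of growing without limit.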

Once the computer that is generating the image knows exactly where your head is, it can place the virtual image in exactly the right place. To you, it will look as though the virtual object is residing in the physical world around you. If the data from the IMU is used properly, the position of this object will not seem to drift as you move your head.

There are other things we want the eccescope to know about in the world around us, such as the positions of our own hands and fingers. We’ll return to that later.

For my bowing motion sensor (3D accelerometer+gyro), I have to do a LOT of calibrating. If it’s for detecting the steady-state of the head (?) maybe the speed of the IMU doesn’t matter that much? The user can simply hold the head steady then maybe after 300ms or so tell it to “consider the head steady”? Is that not good enough, maybe…

The problem with any delay is that it creates a “swimming” effect for any virtual objects that are in your view. You can see this in the video by Marco Tempest in Tokyo that I linked to in a previous post.
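A rough way to see why delay matters: while the head is turning, the image is drawn for where the head was one delay ago, so the angular error is approximately head speed times latency. The sketch below assumes a head turn of 100 degrees per second, which is an illustrative guess and not a measured figure. The 300 ms value comes from the comment above, and the other latencies are picked only for comparison.

```python
def swim_error_deg(head_speed_deg_per_s, latency_ms):
    """Approximate angular misplacement of a virtual object during a head turn."""
    return head_speed_deg_per_s * latency_ms / 1000.0

HEAD_SPEED = 100.0  # deg/s, assumed for illustration

for latency_ms in (300, 50, 10):
    error = swim_error_deg(HEAD_SPEED, latency_ms)
    print(f"{latency_ms:>3} ms of delay: about {error:.0f} deg behind")
```

With those assumptions, a 300 ms delay leaves the virtual object trailing the real world by about 30 degrees during the turn, and it only snaps back into place once the head stops moving.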